Ye Zhu

Monge Tenure-Track Assistant Professor

Department of Computer Science

Laboratoire d'Informatique, LIX

École Polytechnique, Palaiseau, France

Email: ye[dot]zhu[at]polytechnique[dot]edu

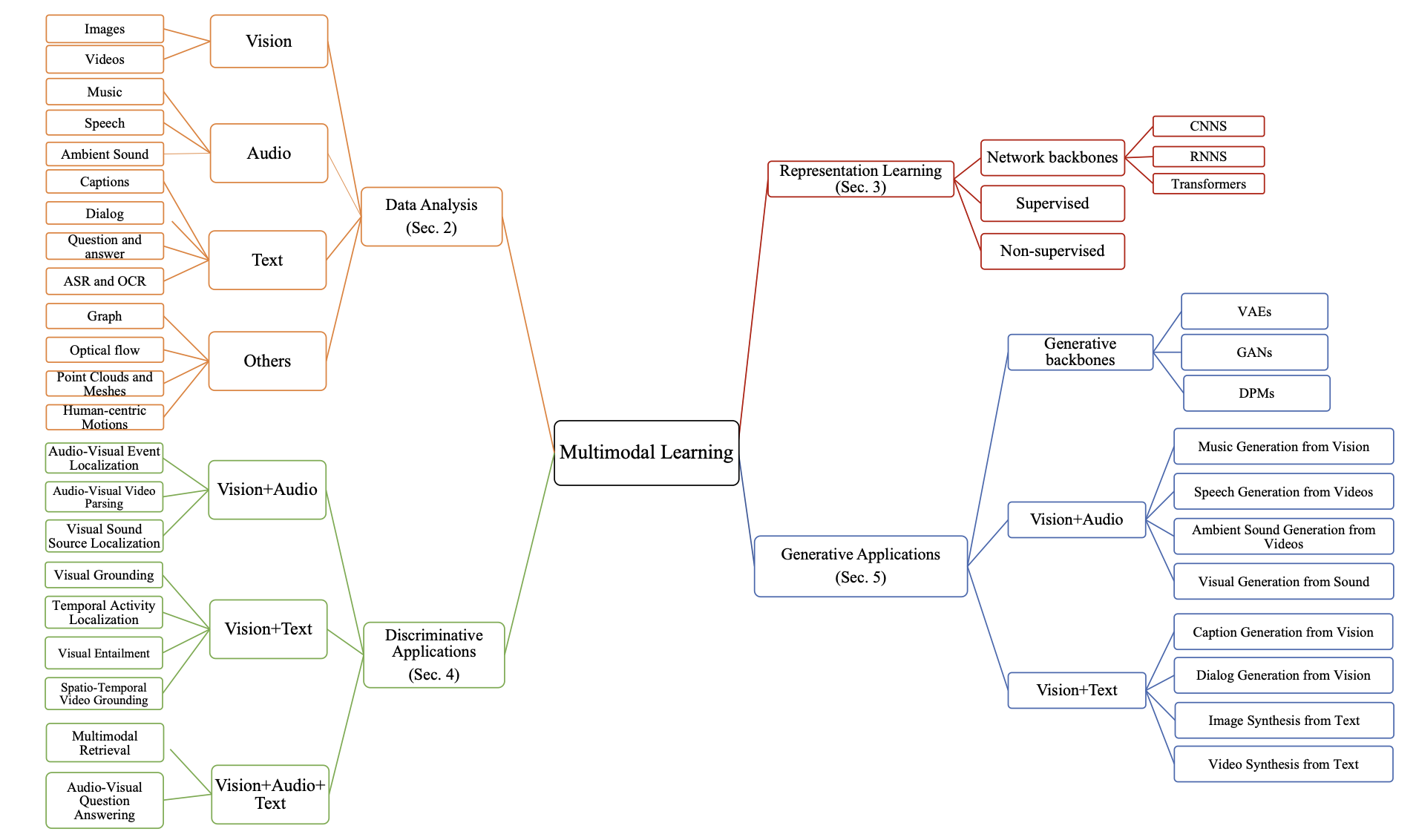

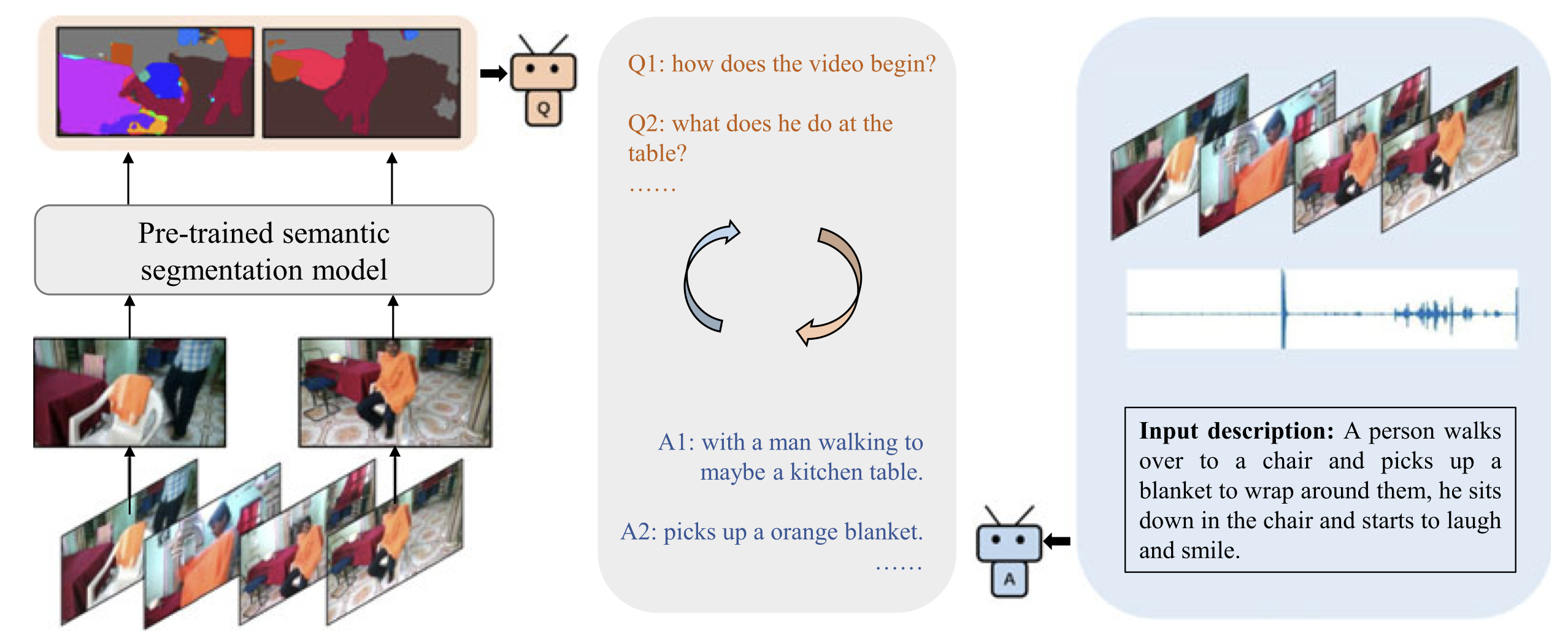

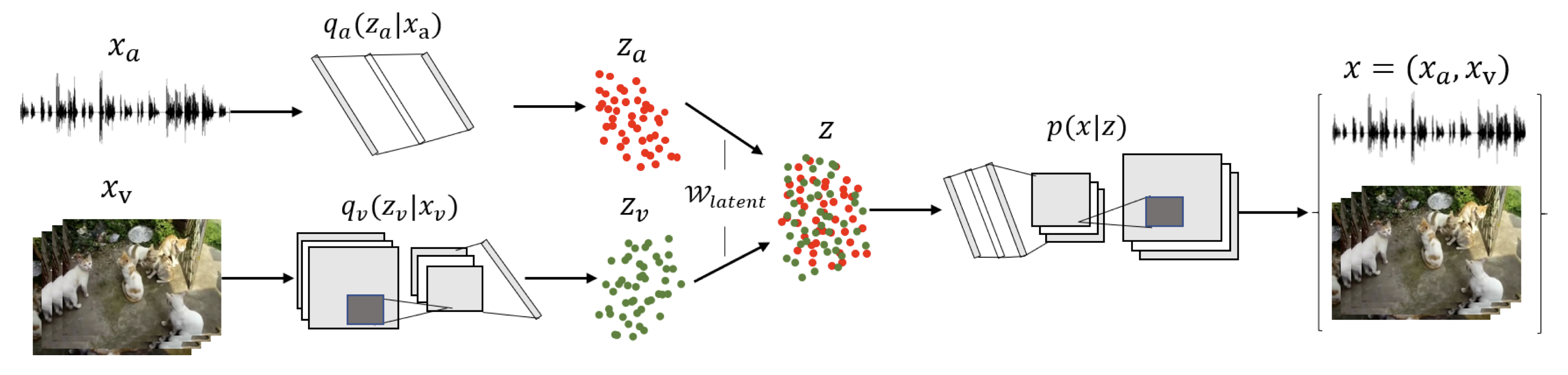

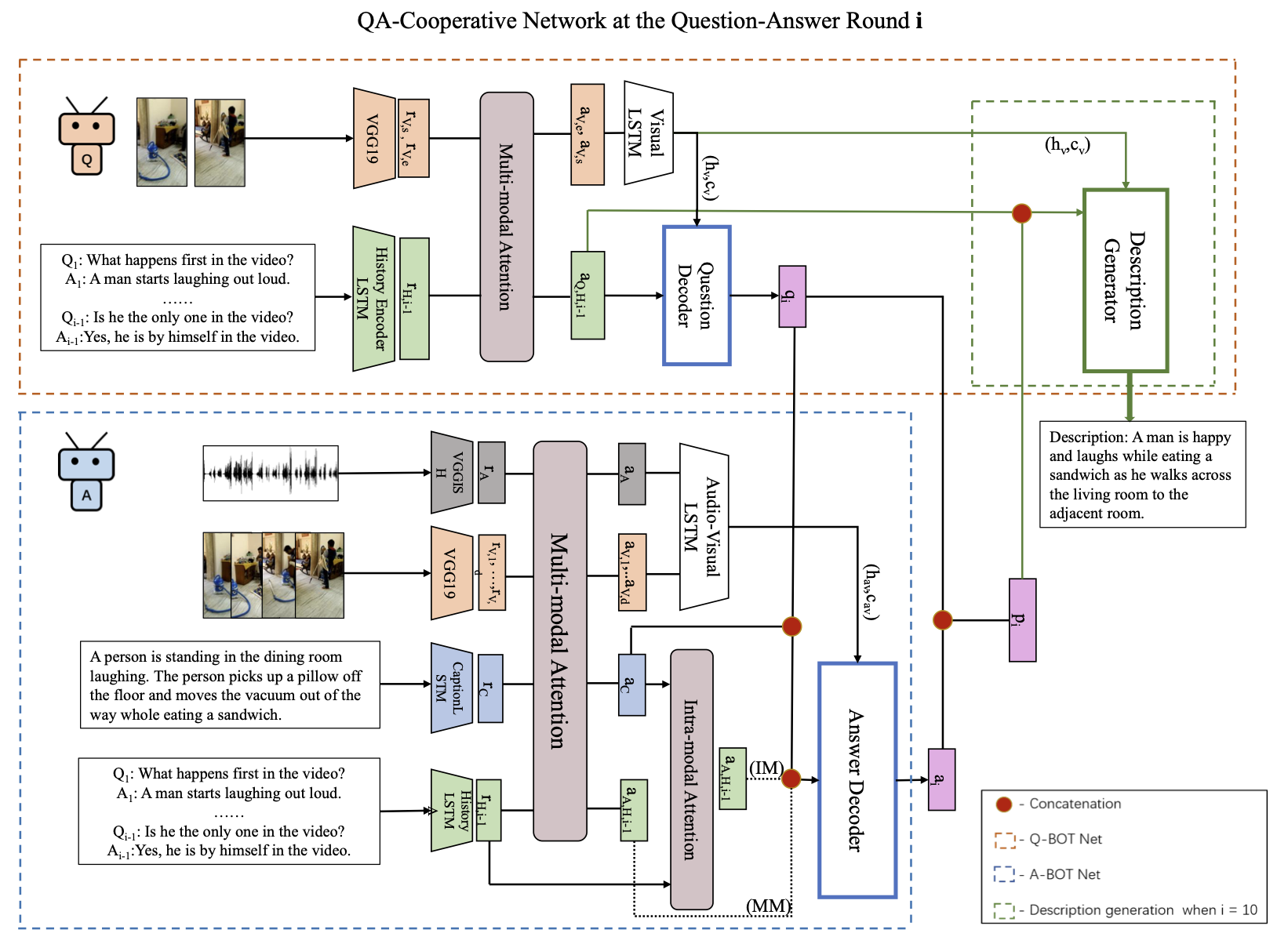

I am a Monge tenure-track assistant professor in the Department of Computer Science, and a Principal Investigator (PI) at the Computer Science Laboratory (Laboratoire d'Informatique, LIX) of École Polytechnique. I am also an ELLIS member and Hi!Paris Chair. My research lies in Machine Learning and Computer Vision, with a particular focus on dynamic generative models (e.g., diffusion models) and their applications in multimodal settings (e.g., vision, audio, and text) as well as in physics (e.g., astrophysical inversions). In recent years, much of my research has focused on understanding generative dynamics from both trajectory and latent-space perspectives, and on developing novel sampling techniques to enable fine-grained, multimodal, and theoretically principled control.

Before joining l'X in 2025, I spent two years as a postdoctoral researcher at the VisualAI Lab at Princeton University, working with Prof. Olga Russakovsky. I earned my Ph.D. in Computer Science under the supervision of Prof. Yan Yan at Illinois Tech in Chicago. I also hold M.S. and B.S. degrees from Shanghai Jiao Tong University (SJTU), and received the French engineering diploma through the dual-degree program with SJTU after studying at École Polytechnique.

I was fortunate to receive several awards and recognitions from the community, such as the ACM Women Scholarship and the MIT EECS Rising Stars Award in 2024.

📬 For students and external collaborators with questions about my availability, please see the Contact.

News

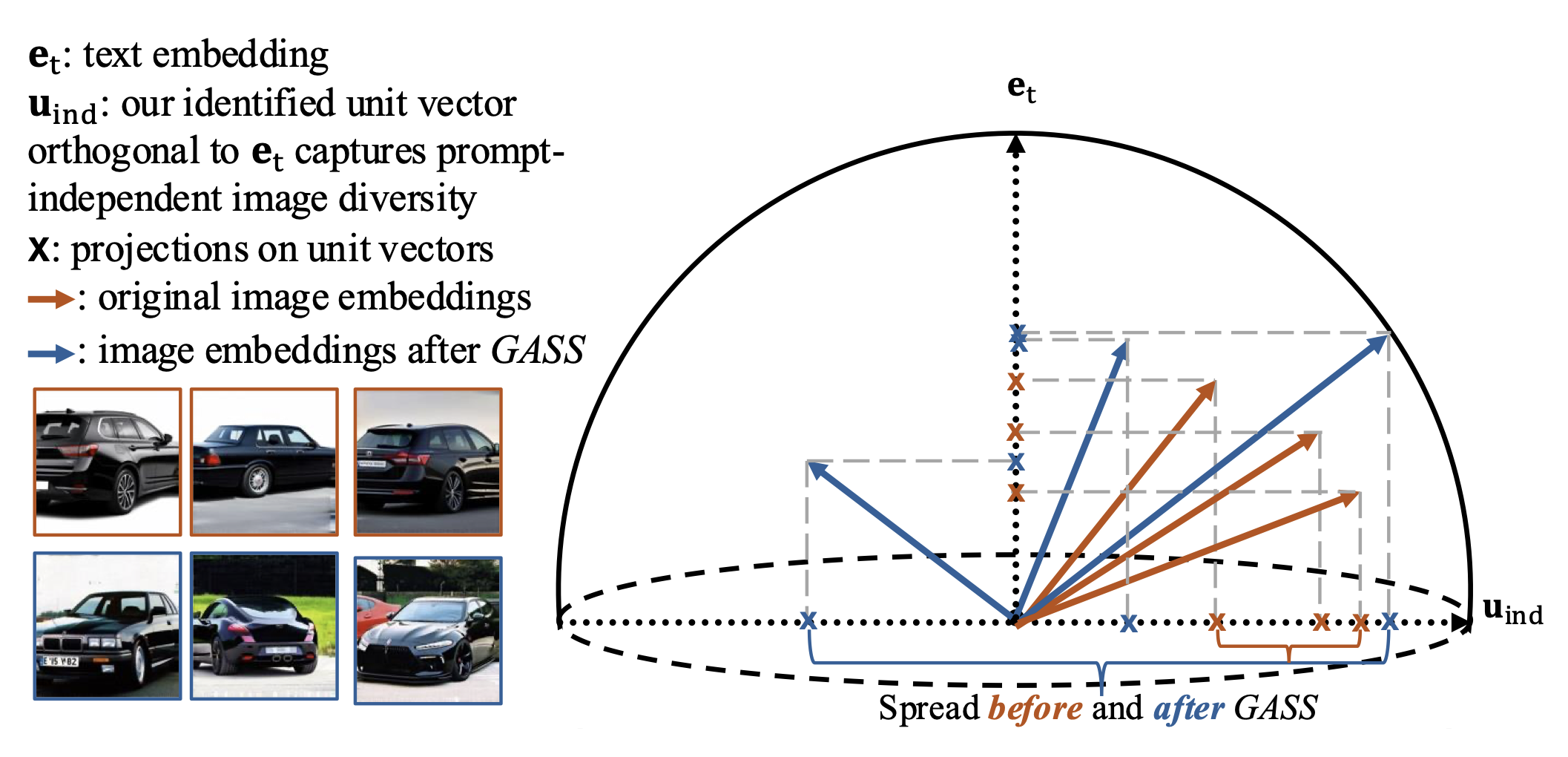

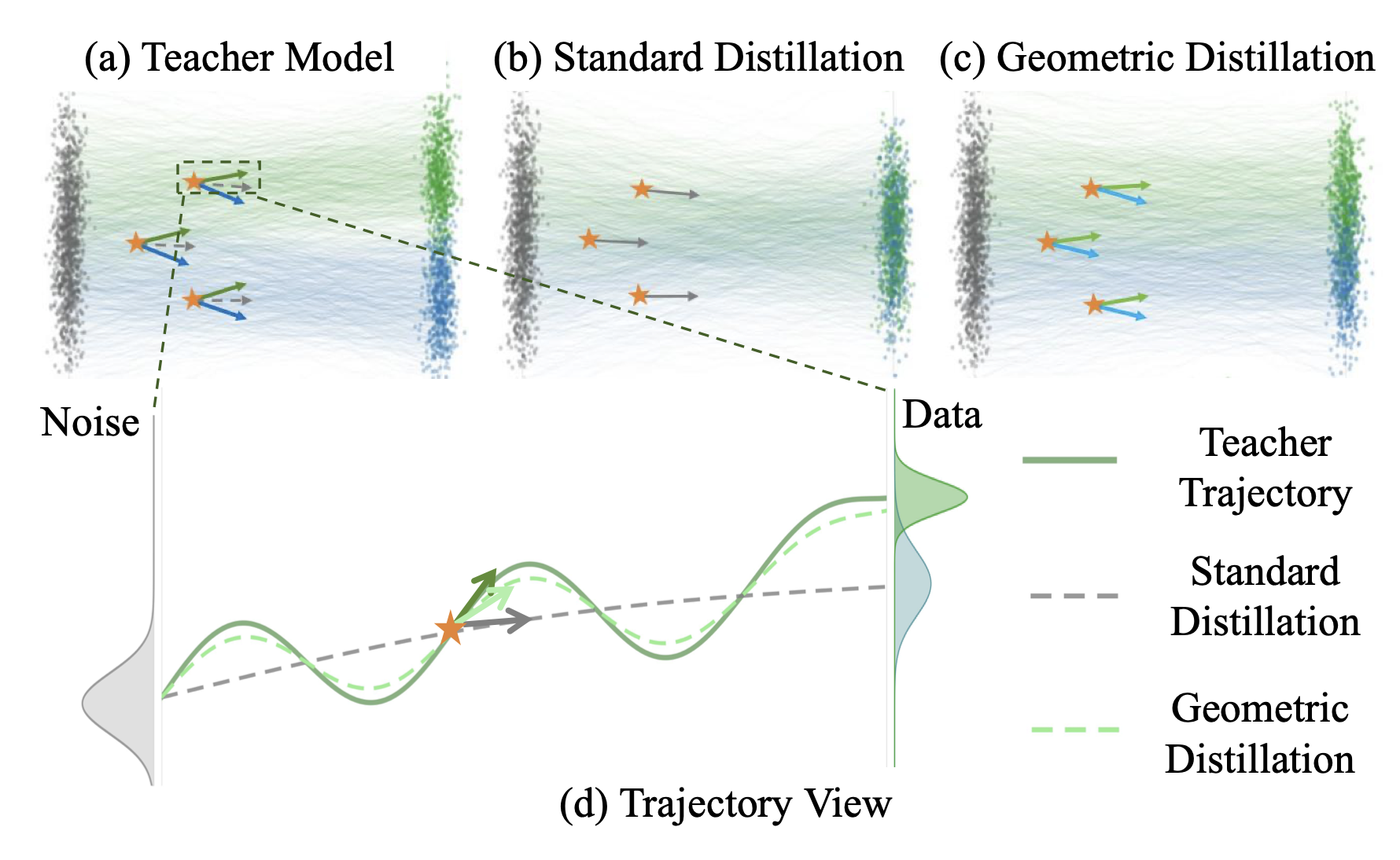

05/2026: Two papers on Geometry-Aware Text-to-Image Generative Distillation and Diverse Sampling accepted to ICML 2026.

04/2026: Received the Hi!Paris Starting Career Chair as the lead PI.

01/2026: We are organizing the 3rd CV4Science workshop at CVPR 2026. Join us in June!

12/2025: I gave several seminar talks on the topic of Dynamic and Structural Sampling for Interpretable Control in Multimodal Generation at LIX. [Talk Slides]

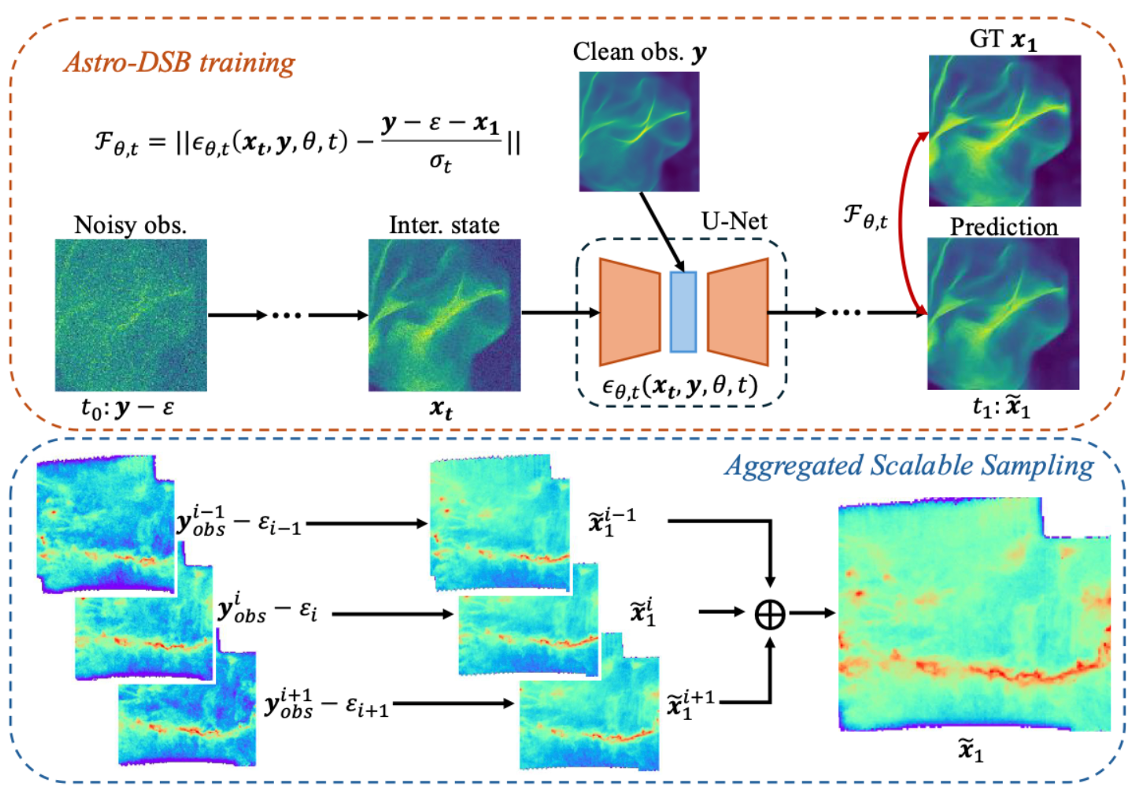

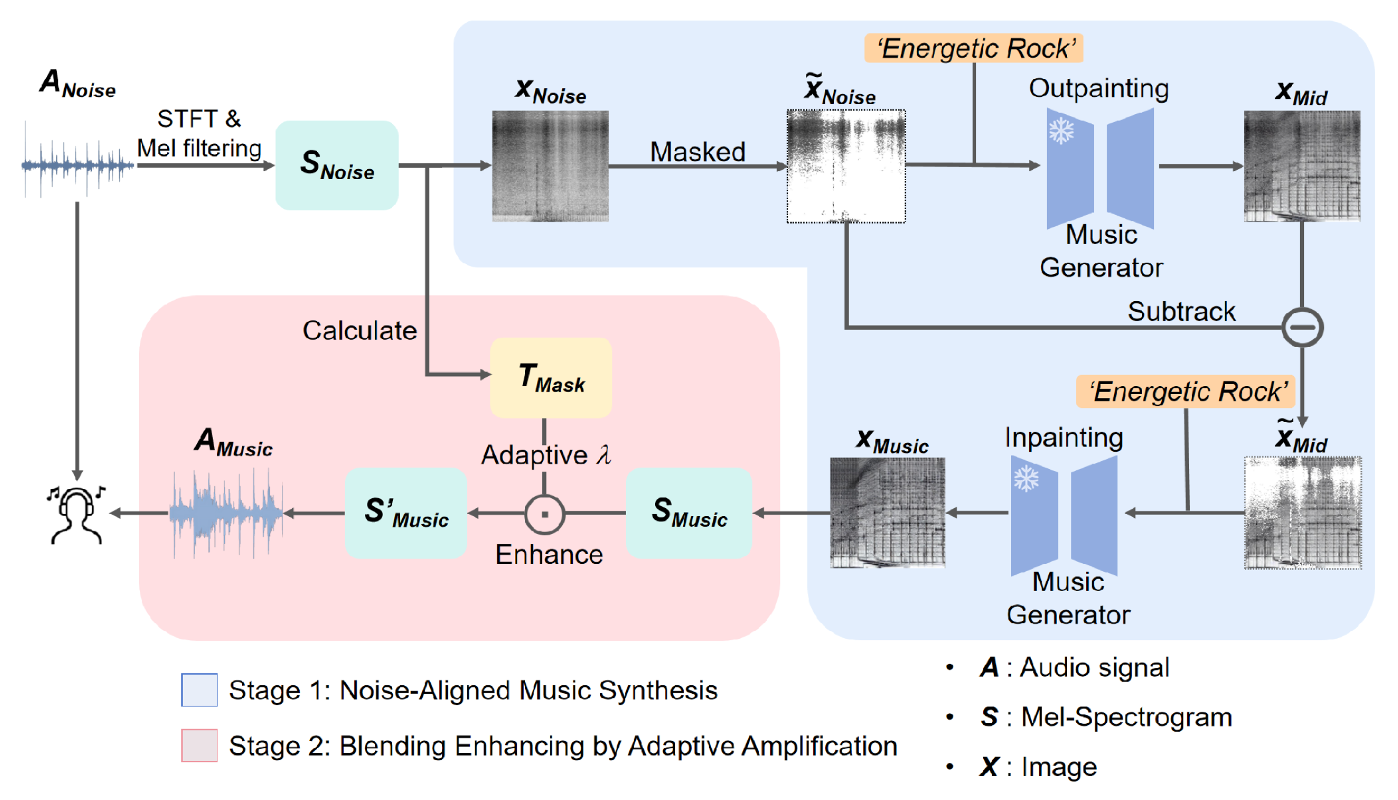

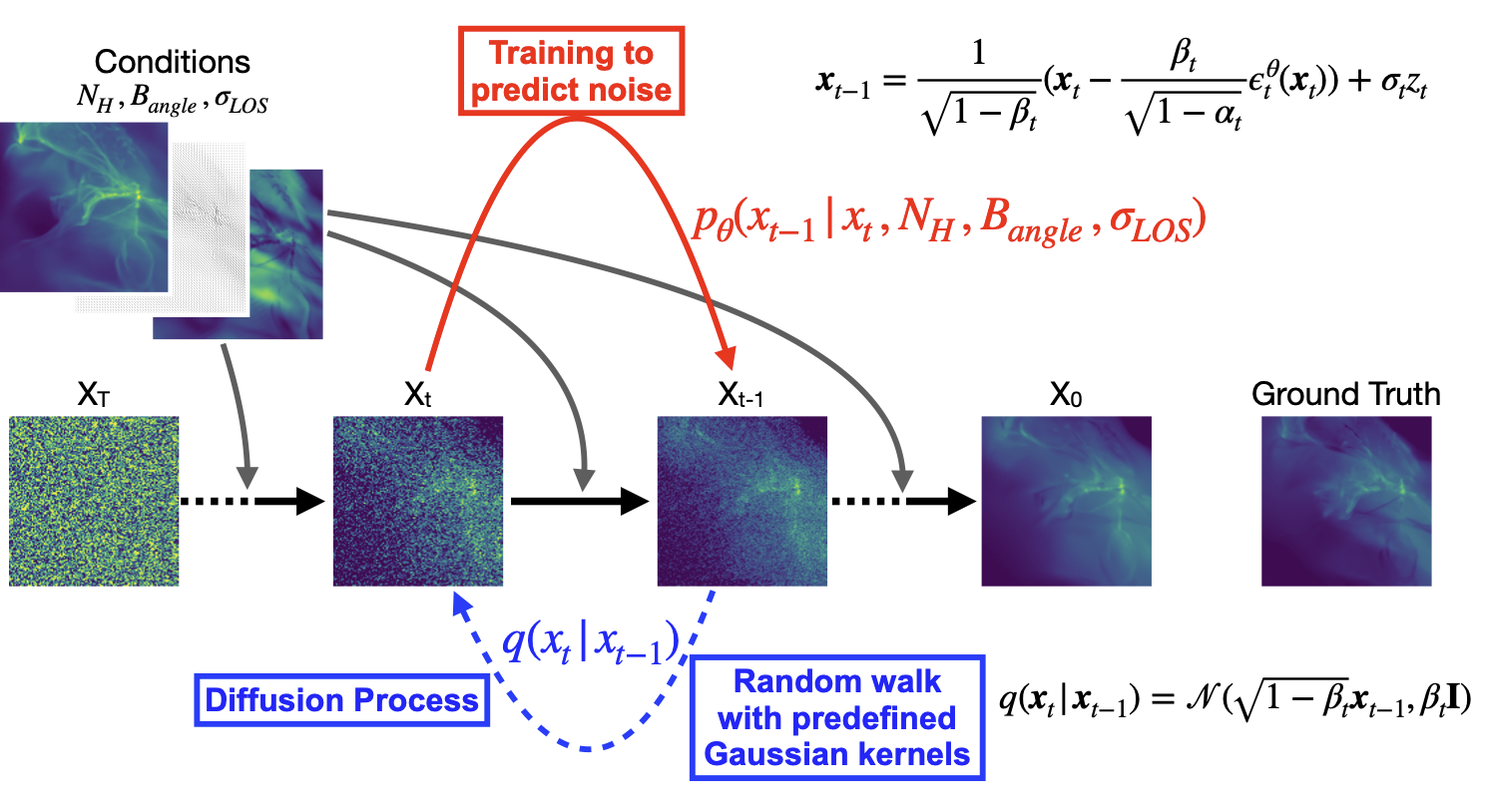

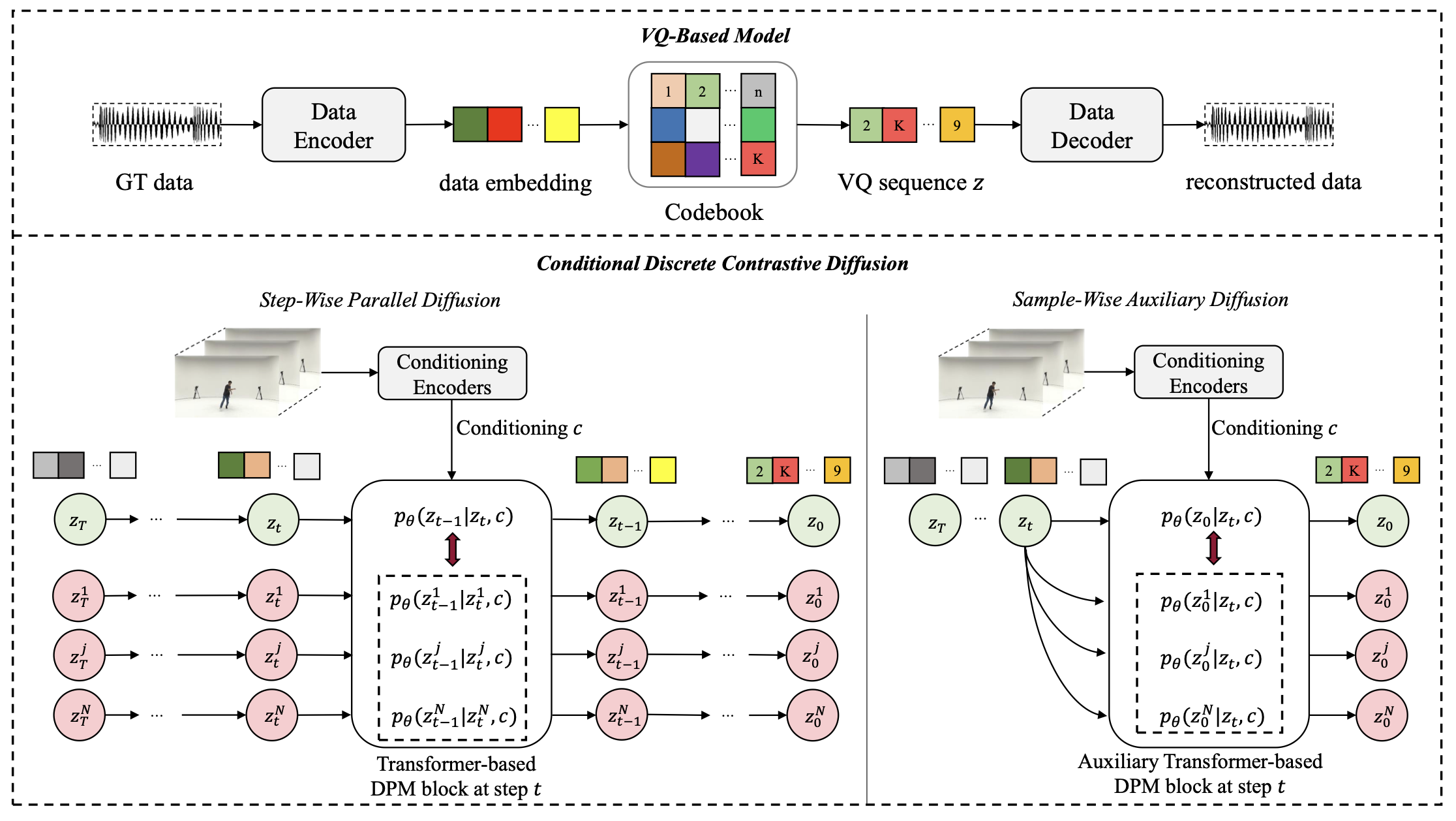

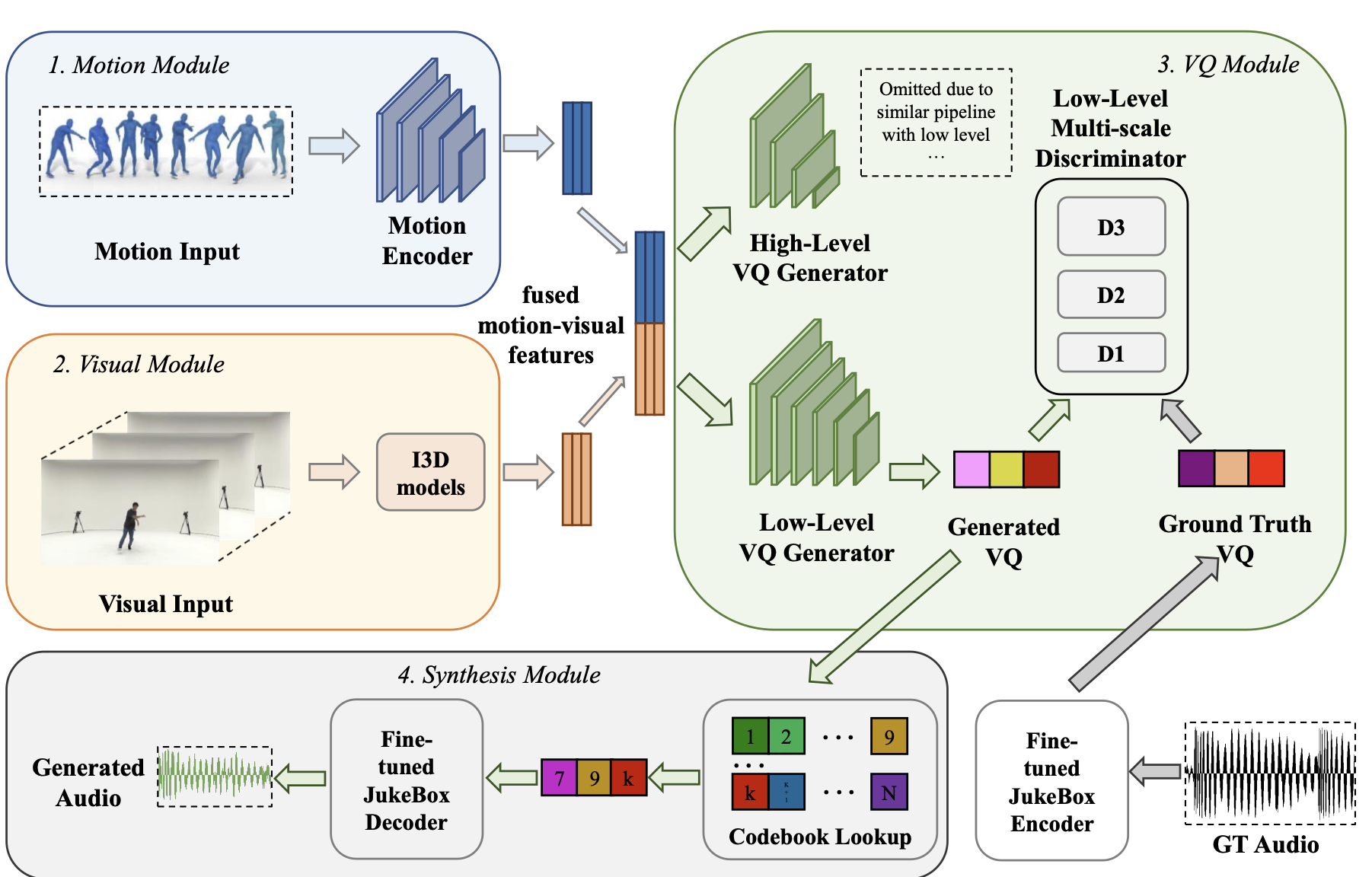

09/2025: Our works Dynamic diffusion Schrödinger bridge for astrophysical inversions and BNMusic for noise acoustic masking via personalized music generation accepted to NeurIPS 2025.

09/2025: I joined École Polytechnique as a Monge tenure-track assistant professor in Computer Science.

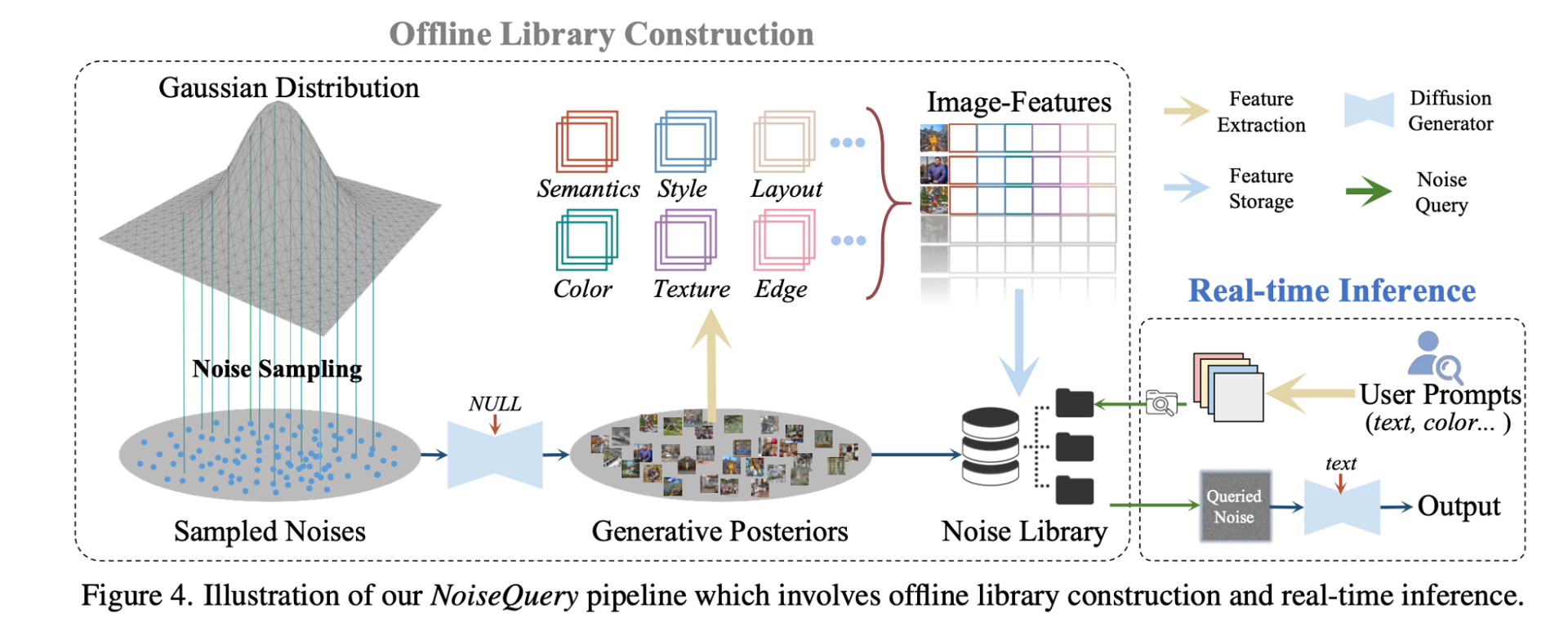

07/2025: Our work NoiseQuery for enhanced goal-driven image generation accepted to ICCV 2025 as a Highlight paper.

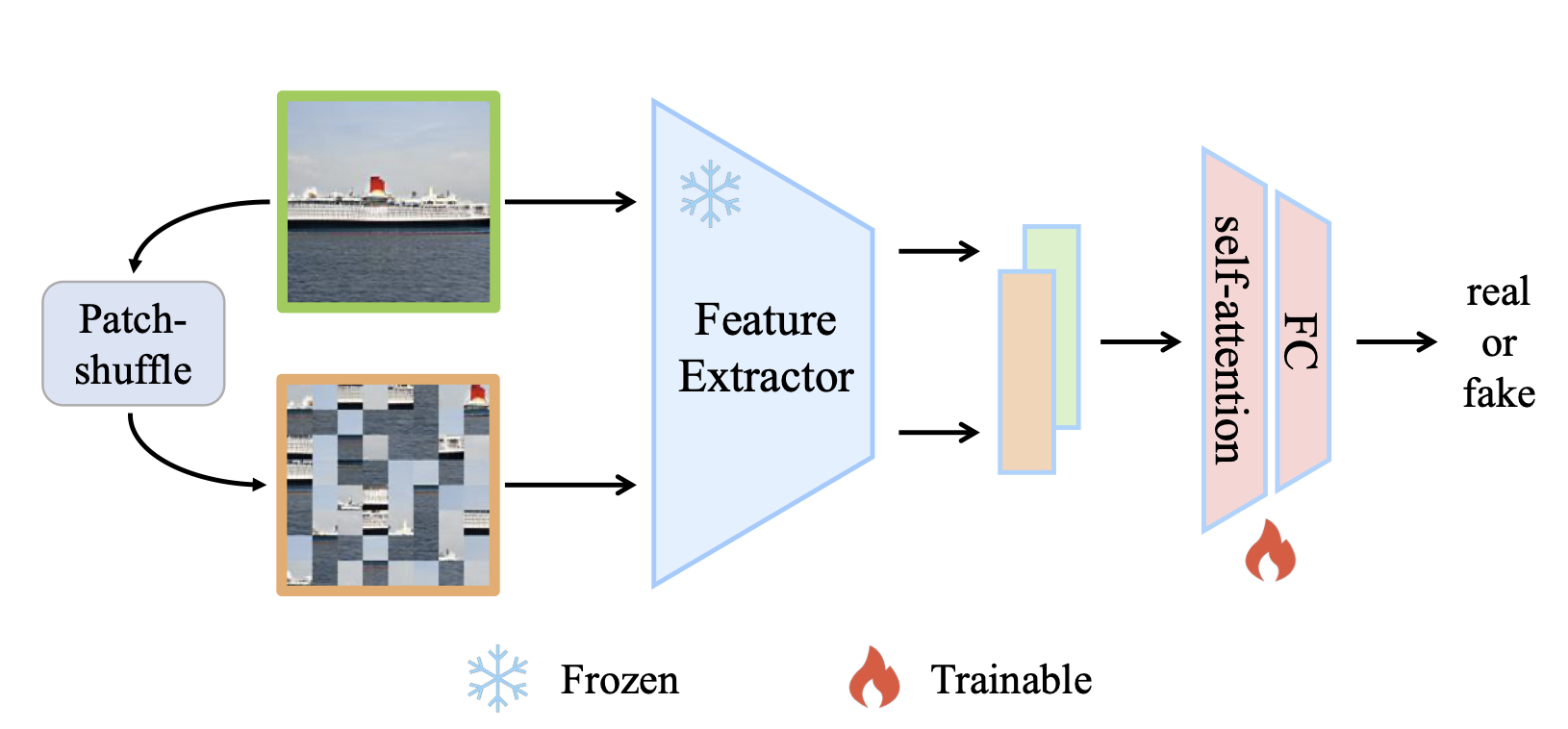

02/2025: Our work D3 for scaling up deepfake detection accepted to CVPR 2025.

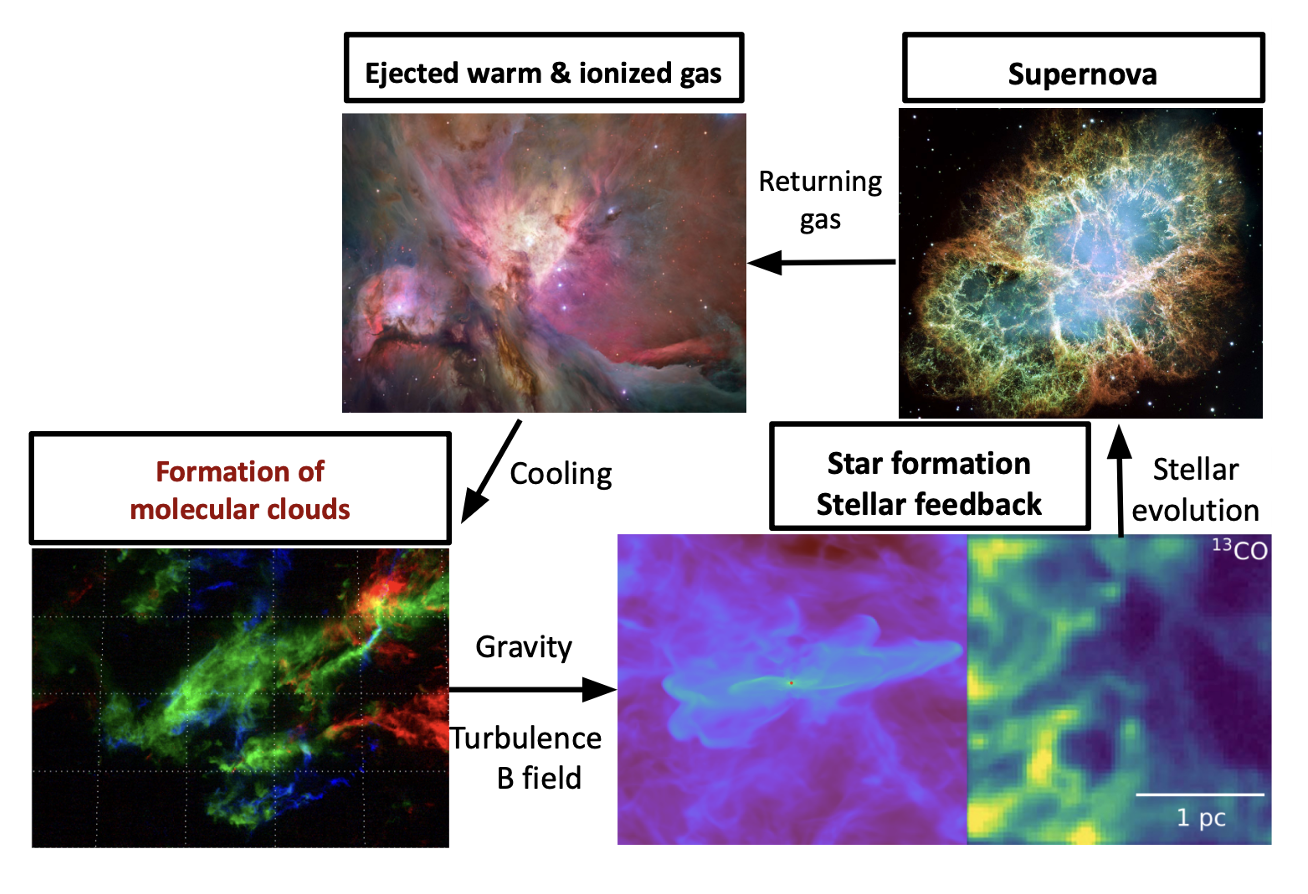

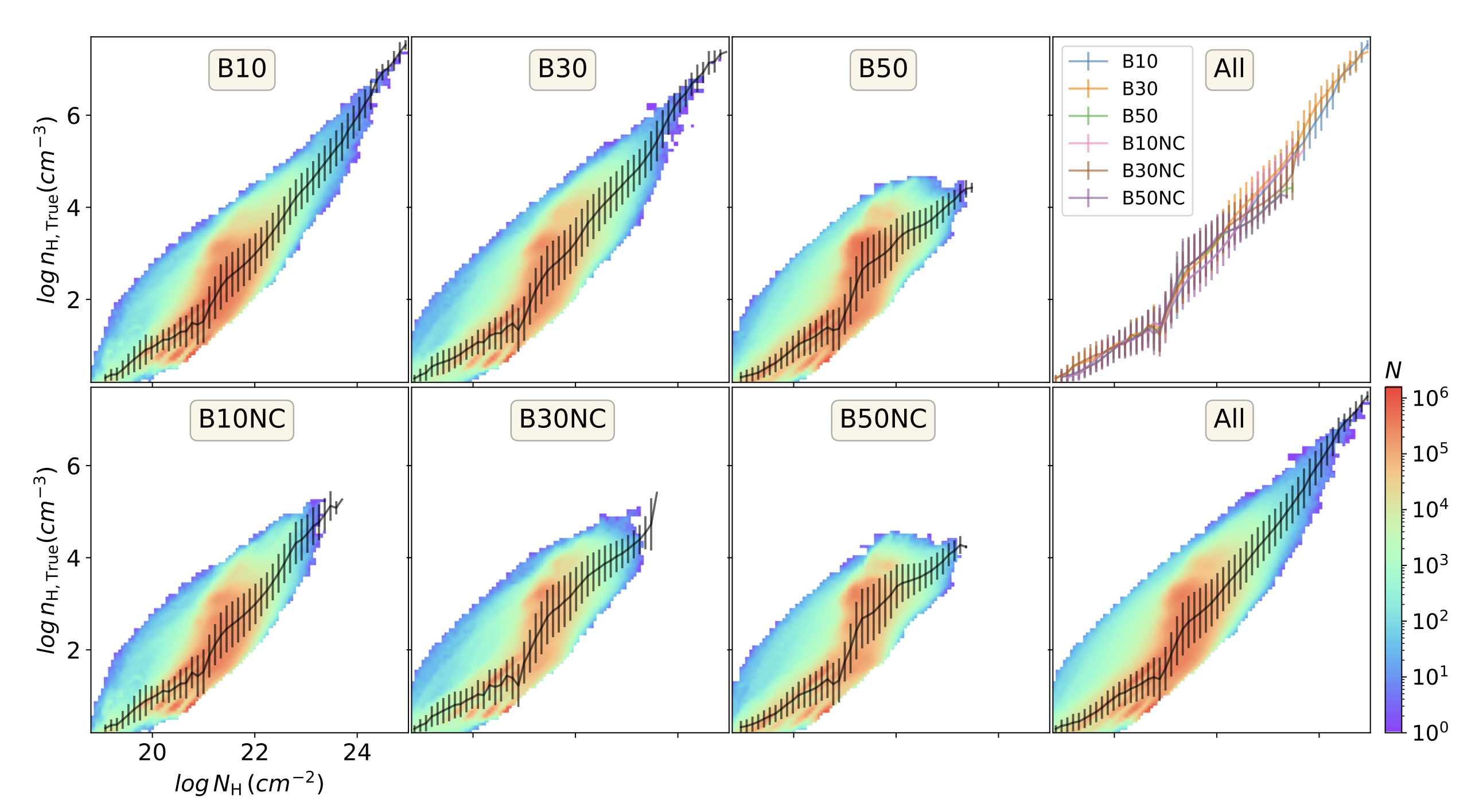

01/2025: Our work on exploring magnetic fields in the interstellar medium via diffusion generative models accepted to The Astrophysical Journal (ApJ).

Selected Publications

* denotes equal contribution. A complete list can be found on Google Scholar.

Ye Zhu, Kaleb S. Newman, Johannes F. Lutzeyer, Adriana Romero-Soriano, Michal Drozdzal, Olga Russakovsky.

In Forty-Third International Conference on Machine Learning (ICML), 2026.

Students

Ph.D. Students:

Enzo Pinchon (co-supervised with Prof. Johannes Lutzeyer): Ph.D. student from 09/2026.

Achilleas Tsimichodimos (co-supervised with Prof. Michalis Vazirgiannis): Ph.D. student from 01/2026.

Research Interns:

Sheng Wan (French Engineering Student at X)

Teaching

Spring 2026

- CSC_43042_EP: Algorithmes pour l'analyse de données en Python, École Polytechnique, France

CSC_52087_EP: Advanced Deep Learning, École Polytechnique, France

Deep Learning, SPEIT, Shanghai Jiao Tong University (SJTU), China

- CSC_51054_EP: Deep Learning, École Polytechnique, France

- COS429: Computer Vision, Guest Lecture on Generative Models, Princeton University, USA

Recent Talks and Outreach

12/2025: Talks on Dynamic and Structural Sampling for Interpretable Control in Multimodal Generation at LIX, École Polytechnique, France.

07/2025: Talk on Controllability of Dynamic Generative Models with Theoretical Groundings at Columbia University, New York City, USA.

04/2025: Talk on A Sustainable Vision for GenAI through the Lens of Dynamic Generative Models at Imperial College London, London, UK.

02/2025: Spotlight talk on Generative AI beyond Scaling at Princeton AI lab, Princeton, USA.

02/2025: Lightning talk on Generative Dynamics for Image Controlling and Astrophysical Modeling at NYC Computer Vision Day, New York City, USA.

11/2024: Talk on A Sustainable Vision for GenAI through the Lens of Dynamic Generative Models at Yale University, New Haven, USA.

10/2024: Talk on A Sustainable Vision for GenAI through the Lens of Dynamic Generative Models at TUM and LMU, Munich, Germany.

08/2024: Talk on Taming Multimodal Generations via Fundamental Inspirations from Mathematics and Physics at TTIC Summer Workshop on Multimodal Artificial Intelligence, Chicago, USA.

04/2024: Talk on Mining the Latent: A Tuning-Free Paradigm for Versatile Applications with Diffusion Models at New York University, New York City, USA.

Service

Workshop Organizers

- ReGenAI: Responsible Generative AI Workshops at CVPR 2024 / 2025

CV4Science: Computer Vision for Science Workshops at CVPR 2025 / 2026

- Machine Learning and Statistics: NeurIPS 2023-2025 (Top Reviewers Award 2024), ICLR 2024-2026, ICML 2023-2026, AISTATS 2025-2026, AAAI 2023-2024

Computer Vision and Graphics: CVPR 2022-2026, ECCV 2022-2026, ICCV 2023-2025, WACV 2023-2024, ACM-MM 2021-2022, SIGGRAPH 2024

- Transactions on Machine Learning Research (TMLR), IEEE Transactions on Image Processing (TIP), IEEE Transactions on Multimedia (TMM), Computer Vision and Image Understanding (CVIU)

Say Hi!

Thanks for your interest in getting in touch! You can reach me at: ye[dot]zhu[at]polytechnique[dot]edu

Before reaching out, please take a moment to read the notes below to help keep our communication efficient. I usually receive a high volume of emails and messages, so replies may take a few days or sometimes longer. Thank you for your patience and understanding. Also, please write to me in English if possible!

For prospective Ph.D. students: I am not currently hiring Ph.D. students. However, new openings may become available. Please check back regularly for updates.

For prospective M.S. students and research/visiting interns: There is no need to contact me directly if you are applying to the M.S. programs at École Polytechnique (EP) or Institut Polytechnique de Paris (IPP). Please refer to the official program websites for detailed information on application procedures and deadlines. An example is the new LLGA MSC&T master's program, which I am co-directing this year.

At the moment, I have limited availability to supervise external visiting students or research interns.

For outreach and other service: I occasionally give talks and regularly organize workshops at ML/CV venues such as NeurIPS and CVPR. Workshop talks are generally easier to accommodate if I plan to attend the conference in person. Other outreach activities may depend on my availability during the semester and are subject to my teaching schedule. Please feel free to reach out with details and expectations.